What Is Prompt Poaching? A Guide for Security Leaders

Most security teams worry about employees pasting sensitive data into AI tools, but there's a less obvious, less visible threat that doesn't require employees to make any mistake at all. A growing attack technique called "prompt poaching" allows browser extensions to silently intercept and exfiltrate conversations with AI chatbots: every question asked, every piece of code pasted, and every confidential document summarized.

In January 2026, researchers discovered two malicious Chrome extensions, with a combined 900,000 users, harvesting ChatGPT and DeepSeek conversations. A separate investigation found that a popular VPN extension with over 6 million users had been doing the same since July 2025, as had seven other extensions from the same publisher.

Prompt Poaching, Defined

Prompt poaching is an attack method in which browser extensions capture and exfiltrate the conversations users have with AI chatbots. Unlike prompt injection, which manipulates AI behavior, prompt poaching targets the conversations themselves, capturing prompts before they reach the AI provider's servers and responses after they are returned.

What makes prompt poaching particularly problematic is its invisibility. There's no error message, no warning, no indication that anything is wrong. The extension simply listens, captures, and exfiltrates while the user continues their conversation completely unaware of what’s happening.

How Prompt Poaching Works

The attack typically follows a consistent pattern. A user installs what appears to be a legitimate extension, such as a VPN, ad blocker, or AI sidebar tool. The extension has strong reviews and may even carry a "featured" badge from Google, meaning it passed manual review. From there:

1. The extension monitors browser tabs continuously in the background

2. When the user visits ChatGPT, Claude, or other AI platforms, scripts are injected

3. These scripts override browser APIs, intercepting network requests and responses

4. Every prompt submitted and response received passes through the extension

5. Data is packaged, compressed, and sent to external servers every 30 minutes

6. The harvested data flows to data brokers or is sold for espionage, phishing, and other attacks

Naturally, some extensions may use different exfiltration intervals, target different AI platforms, or employ alternative methods like DOM scraping instead of API hijacking, but the core pattern remains the same: the silent interception and exfiltration of AI conversations.
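The first three steps all depend on what an extension declares in its `manifest.json`, which administrators can inspect before or after installation. The following is a minimal Python sketch, assuming Manifest V3 extensions and a hypothetical `AI_HOSTS` watch-list; the function names are illustrative and not part of any existing tool. It flags content scripts whose match patterns cover AI chatbot hosts and reports whether they run in the page's `MAIN` world (where page APIs such as `window.fetch` can be overridden, as in step 3). It also flags host access to those sites combined with the `scripting` permission, which allows injection at runtime.

```python
# Hypothetical watch-list of AI chatbot hosts; adjust for the tools your organization uses.
AI_HOSTS = ("chatgpt.com", "chat.openai.com", "claude.ai", "chat.deepseek.com", "gemini.google.com")


def pattern_covers_host(pattern: str, host: str) -> bool:
    """Return True if a Chrome match pattern (e.g. '*://*.openai.com/*') can match `host`."""
    if pattern == "<all_urls>":
        return True
    scheme, sep, rest = pattern.partition("://")
    if not sep or scheme not in ("*", "http", "https"):
        return False  # only web pages matter here; file:// and other schemes are ignored
    pattern_host = rest.split("/", 1)[0]  # the path part is ignored in this simplified check
    if pattern_host == "*":
        return True
    if pattern_host.startswith("*."):
        base = pattern_host[2:]
        return host == base or host.endswith("." + base)
    return host == pattern_host


def ai_hosts_covered(patterns: list[str]) -> list[str]:
    """AI hosts from the watch-list that any of `patterns` could match."""
    return sorted({h for h in AI_HOSTS for p in patterns if pattern_covers_host(p, h)})


def audit_manifest(manifest: dict) -> list[str]:
    """Flag Manifest V3 entries that let an extension run code on AI chatbot pages."""
    findings = []
    # Static injection: content scripts the browser loads automatically on matching pages.
    for i, script in enumerate(manifest.get("content_scripts", [])):
        hits = ai_hosts_covered(script.get("matches", []))
        if hits:
            # "MAIN" shares the page's JavaScript globals; the default "ISOLATED" world
            # shares only the DOM (enough for scraping, but not for replacing page APIs directly).
            world = script.get("world", "ISOLATED")
            findings.append(f"content_scripts[{i}] injects into {', '.join(hits)} (world={world})")
    # Dynamic injection: chrome.scripting needs the "scripting" permission plus host access.
    reachable = ai_hosts_covered(manifest.get("host_permissions", []))
    if reachable and "scripting" in manifest.get("permissions", []):
        findings.append(f"can inject scripts at runtime into {', '.join(reachable)}")
    return findings


# Illustrative manifest resembling an AI sidebar extension.
example = {
    "manifest_version": 3,
    "name": "Example AI Sidebar",
    "permissions": ["storage", "scripting"],
    "host_permissions": ["<all_urls>"],
    "content_scripts": [
        {"matches": ["https://chatgpt.com/*", "https://chat.deepseek.com/*"],
         "js": ["inject.js"], "world": "MAIN"}
    ],
}
for finding in audit_manifest(example):
    print(finding)
```

This only reads declared capabilities, so it cannot distinguish a benign AI sidebar from a harvesting one; a hit is a reason to look closer, not a verdict. Match-pattern handling is deliberately simplified.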

Examples of Prompt Poaching

Prompt poaching has already affected millions of users across multiple extension families. Here are three documented cases that show how the attack operates in practice.

Fake AI Sidebar Extensions (January 2026)

Researchers at OX Security discovered two Chrome extensions impersonating a legitimate AI sidebar tool. One had 600,000 users and carried Google's "featured" badge, while the other had 300,000 users. Both requested permission to collect "anonymous, non-identifiable analytics" while actually exfiltrating complete conversation content from ChatGPT and DeepSeek sessions every 30 minutes.

VPN Extension Harvesting (July 2025)

A popular VPN extension with over 6 million users and a 4.7-star rating had been harvesting AI conversations since July 2025, according to research from Koi Security. The extension marketed itself as a privacy tool, but an update silently added code that captured every prompt and response from eight AI platforms. The same harvesting code appeared in seven other extensions from the same publisher, including ad blockers and browser security tools, and the data flowed to an affiliated data broker that sold it for marketing analytics.

Legitimate Analytics Tools (January 2026)

Secure Annex researchers identified legitimate browser extensions engaging in prompt poaching. One well-known web analytics tool with over 1 million users updated its privacy policy in January 2026 to state explicitly that it collects prompts, queries, uploaded files, and AI outputs, and it gathers them using the same technical approach as the malicious extensions.

Why Traditional Security Misses It

Prompt poaching exploits several blind spots in traditional security models. Extensions operate in a trusted space, running inside the browser itself with user-granted permissions. Endpoint detection tools are designed to catch malware, not productivity tools with "featured" badges and thousands of positive reviews.

The exfiltration traffic appears completely legitimate: it uses standard HTTPS connections that are indistinguishable from those of the thousands of other SaaS tools employees use daily. Network monitoring sees encrypted traffic to unfamiliar domains, but that's true of most modern web applications.
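Encryption hides the payload but not the timing. One signal that survives HTTPS, offered here as an illustration rather than something the cited research describes, is the regularity of scheduled uploads such as the 30-minute interval in the attack pattern above. Below is a minimal Python sketch, assuming request timestamps can be exported per browser and destination domain from proxy or DNS logs; `looks_like_beacon` and its thresholds are hypothetical choices, not tuned values.

```python
from statistics import mean, median, pstdev


def looks_like_beacon(timestamps: list[float], min_events: int = 6,
                      max_cv: float = 0.1) -> tuple[bool, float]:
    """Judge whether requests from one browser to one domain arrive on a fixed schedule.

    Returns (is_periodic, median_gap_seconds). The coefficient of variation
    (standard deviation / mean) of the gaps between requests is near 0 for
    timer-driven uploads and usually much larger for human-driven browsing.
    """
    ts = sorted(timestamps)
    if len(ts) < min_events:
        return False, 0.0  # too few events to judge regularity
    gaps = [later - earlier for earlier, later in zip(ts, ts[1:])]
    avg = mean(gaps)
    if avg <= 0:
        return False, 0.0  # every request shares one timestamp
    cv = pstdev(gaps) / avg
    return cv <= max_cv, float(median(gaps))


# Simulated upload times in seconds: eight requests about 30 minutes apart, with jitter.
uploads = [0, 1802, 3598, 5401, 7203, 8999, 10800, 12604]
# Simulated human browsing to another domain: bursts separated by irregular pauses.
browsing = [0, 40, 900, 950, 4000, 4100, 9000, 9020]

print(looks_like_beacon(uploads))
print(looks_like_beacon(browsing))
```

Legitimate software such as update checkers, telemetry, and sync clients also contacts servers on a timer, so a periodic pattern to an unfamiliar domain is a lead for investigation rather than proof of exfiltration. An extension that randomizes its interval or uploads only alongside user activity would evade this check.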

Auto-updates make the problem worse. Chrome and Edge extensions update silently by default, meaning users who installed a clean extension months ago can wake up with new code harvesting their conversations. Publishers can add harvesting capabilities at any time without notification or consent.
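Because updates arrive silently, one way to make them visible is to compare consecutive versions of an installed extension. This is a minimal Python sketch, assuming Manifest V3 extensions and that each version's unpacked files have been snapshotted before being replaced (Chrome keeps installed extensions in per-version folders under the profile's `Extensions` directory, though the exact path varies by OS and profile). `manifest_drift` reports newly requested permissions and injection targets. Since an update can ship harvesting code without touching its manifest, `changed_scripts` also compares SHA-256 digests of the JavaScript files. All function names are hypothetical.

```python
import hashlib
from pathlib import Path


def _scopes(manifest: dict) -> dict[str, set[str]]:
    """Collect the manifest fields that define where and how an extension can run."""
    return {
        "permissions": set(manifest.get("permissions", [])),
        "host_permissions": set(manifest.get("host_permissions", [])),
        "content_script_matches": {
            pattern
            for script in manifest.get("content_scripts", [])
            for pattern in script.get("matches", [])
        },
    }


def manifest_drift(old: dict, new: dict) -> dict[str, list[str]]:
    """Entries present in the new version's manifest but absent from the old one."""
    before, after = _scopes(old), _scopes(new)
    return {key: sorted(after[key] - before[key]) for key in after if after[key] - before[key]}


def script_digests(ext_dir: Path) -> dict[str, str]:
    """SHA-256 of every .js file in an unpacked extension directory, keyed by relative path."""
    return {
        path.relative_to(ext_dir).as_posix(): hashlib.sha256(path.read_bytes()).hexdigest()
        for path in sorted(ext_dir.rglob("*.js"))
    }


def changed_scripts(old: dict[str, str], new: dict[str, str]) -> list[str]:
    """Scripts that were added, or whose contents changed, between two versions."""
    return sorted(name for name, digest in new.items() if old.get(name) != digest)


# Illustrative manifests: version 1.1 quietly widens its reach to an AI chatbot.
v1 = {"manifest_version": 3, "version": "1.0", "permissions": ["storage"],
      "content_scripts": [{"matches": ["https://example.com/*"], "js": ["content.js"]}]}
v2 = {"manifest_version": 3, "version": "1.1", "permissions": ["storage", "scripting"],
      "host_permissions": ["<all_urls>"],
      "content_scripts": [{"matches": ["https://example.com/*", "https://claude.ai/*"],
                           "js": ["content.js"]}]}
print(manifest_drift(v1, v2))
```

In practice, `script_digests` would be run on the old and new version folders and the two results passed to `changed_scripts`. A changed script is expected on almost every update, so the digest comparison tells you where to review code, not whether that code is malicious.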

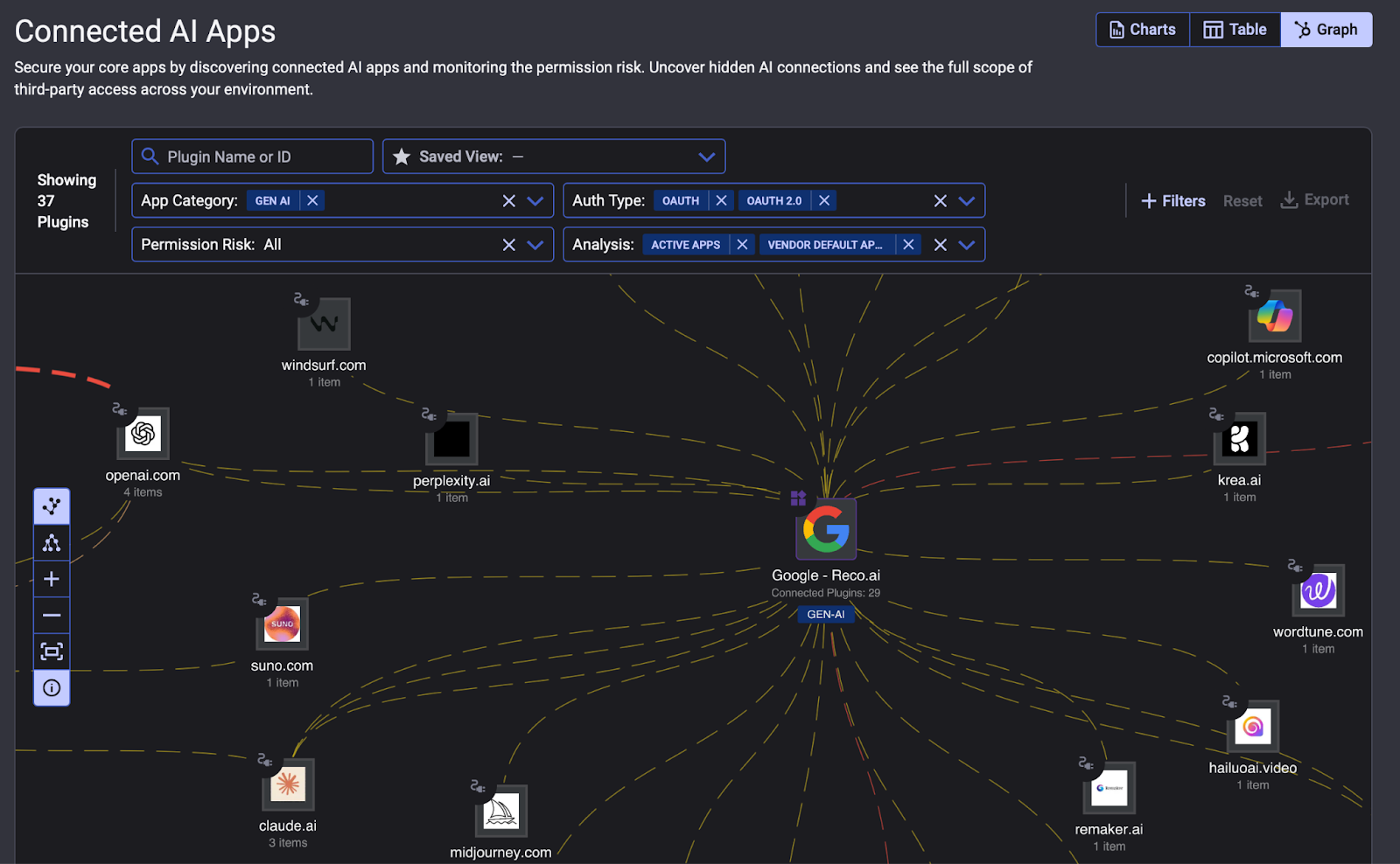

Closing the Visibility Gap with Reco

The core challenge with prompt poaching is visibility. Traditional security tools weren't built to track how employees interact with AI across your environment. Reco's Dynamic SaaS Security platform changes that.

Reco's Knowledge Graph maps every user, application, permission, data flow, and AI connection across your SaaS ecosystem in real time. When a prompt poaching incident surfaces, security teams don't have to guess at the damage. They can see exactly which users were exposed, what data was reachable through those sessions, and where to focus remediation. An invisible threat becomes a scoped, manageable incident.

But visibility only matters if it can keep pace with how fast your environment changes. Reco's SaaS App Factory™ onboards new applications in days rather than quarters, with over 200 integrations available today. As new AI tools, shadow applications, or third-party connections appear, they're automatically mapped into the same unified view, closing security gaps before attackers can exploit them.

The result: continuous AI governance protecting over 2 million users worldwide, giving security teams the confidence to embrace AI without losing control over where sensitive data flows.

Learn more at Agentic AI Security for SaaS | Stop Shadow AI with Reco.

Gal Nakash

ABOUT THE AUTHOR

Gal is the Cofounder & CPO of Reco. Gal is a former Lieutenant Colonel in the Israeli Prime Minister's Office. He is a tech enthusiast with a background as a security researcher and hacker. Gal has led teams in multiple cybersecurity areas, with expertise in the human element.