8 Best AI Agent Security Tools for CISOs to Consider in 2026

AI agents are quickly moving from experimental assistants to active participants in enterprise environments. They can interact with SaaS applications, call APIs, retrieve sensitive data, and trigger automated workflows with minimal human involvement. While these capabilities improve efficiency, they also introduce a new security challenge. Autonomous agents can inherit permissions, access multiple systems, and execute chained actions across cloud and SaaS environments.

Traditional security tools were not designed to monitor or govern this level of automated decision-making. As organizations deploy more agent-driven workflows, security teams need specialized platforms that can discover AI agents, monitor their activity, and control how they interact with data, applications, and identities.

8 Best AI Agent Security Tools for CISOs

The following platforms help security teams discover, monitor, and govern AI agents operating across enterprise environments.

1. Reco

Reco is a SaaS security platform that helps organizations discover and monitor applications, integrations, and automated workflows operating across SaaS environments. It provides visibility into how identities, including service accounts and automated processes, interact with SaaS applications and APIs. By analyzing activity across connected SaaS platforms, Reco helps security teams detect excessive permissions, risky integrations, and abnormal access patterns that could expose sensitive data.

Key capabilities

- SaaS application and integration discovery

- Identity and access governance across SaaS environments

- Detection of risky integrations and abnormal activity

Best for: CISOs seeking visibility and control over SaaS integrations, identities, and automated workflows.

2. Microsoft Defender for AI

Microsoft Defender provides security capabilities for AI workloads through services such as Defender for Cloud and Defender XDR. The platform helps organizations discover AI agents, monitor their activity, and identify misconfigurations or vulnerabilities affecting AI workloads. Security alerts generated from AI environments can be correlated with other signals across identities, endpoints, and cloud infrastructure.

Key capabilities

- Discovery and monitoring of AI applications and agents

- Threat detection for generative AI workloads

- Correlated security alerts across cloud and identity environments

Best for: Organizations using the Microsoft security ecosystem that want integrated monitoring for AI workloads.

3. Palo Alto Networks AI Security

Palo Alto Networks provides AI security through Prisma Cloud AI Security Posture Management (AI-SPM). The platform gives organizations visibility into AI pipelines, models, and infrastructure across cloud environments. It continuously analyzes AI environments to detect misconfigurations, excessive access, and security risks affecting AI systems.

Key capabilities

- AI asset discovery across models, services, and infrastructure

- AI pipeline and configuration risk analysis

- Attack path analysis across cloud environments

Best for: Enterprises running AI workloads in cloud environments.

4. Wiz AI Security

Wiz provides AI security capabilities through AI Security Posture Management (AI-SPM). The platform discovers AI assets such as models, SDKs, and AI services and maps how they interact with infrastructure and data. Wiz continuously analyzes AI environments to identify misconfigurations, exposed endpoints, and risky access paths that could lead to security incidents.

Key capabilities

- AI asset inventory and discovery

- Detection of AI misconfigurations and vulnerabilities

- Attack path analysis across AI environments

Best for: Cloud-first organizations that need visibility into AI pipelines and infrastructure.

5. Lacework AI Security

Lacework technology now forms part of Fortinet’s FortiCNAPP platform, which provides cloud-native security across workloads and infrastructure. The platform uses behavioral analytics and automated monitoring to detect anomalies, suspicious activity, and misconfigurations in cloud environments. While not designed specifically for AI agents, it helps secure the cloud infrastructure where AI systems and automated services operate.

Key capabilities

- Behavioral analytics for cloud activity monitoring

- Detection of misconfigurations and vulnerabilities

- Runtime protection for cloud workloads and containers

Best for: Organizations securing cloud infrastructure that hosts AI workloads.

6. Protect AI

Protect AI, now part of Palo Alto Networks, is a security platform designed to secure machine learning models and AI applications across the development lifecycle. The platform provides tools that identify vulnerabilities in AI pipelines, monitor model behavior, and detect threats affecting deployed AI systems. It helps organizations secure AI models, training pipelines, and AI applications before and after deployment.

Key capabilities

- Security scanning for AI models and artifacts

- Red-teaming and vulnerability testing for AI systems

- Monitoring of AI models in production environments

Best for: Organizations building or deploying machine learning models.

7. HiddenLayer

HiddenLayer is an AI security platform focused on protecting machine learning models and AI systems from emerging threats. The platform provides runtime monitoring for deployed models and detects attacks such as model extraction, adversarial inputs, and inference manipulation. HiddenLayer also offers security assessments to identify vulnerabilities in AI systems before deployment.

Key capabilities

- Runtime protection for AI models

- Detection of adversarial and model extraction attacks

- Security assessments for AI and ML systems

Best for: Organizations deploying AI models in production environments.

8. Lakera Guard

Lakera Guard is a security platform designed to protect generative AI applications and AI agents from prompt-based attacks. The platform analyzes prompts and responses in real time to detect threats such as prompt injection, jailbreak attempts, and sensitive data leakage. It integrates with LLM applications to enforce security policies and block unsafe inputs or outputs.

Key capabilities

- Prompt injection detection and prevention

- Real-time monitoring of LLM prompts and responses

- Data leakage detection in generative AI systems

Best for: Organizations building LLM applications and AI agents.

AI Agent Security Tools Comparison Overview

The table below summarizes how the leading AI agent security tools compare across their primary security focus, core capabilities, and ideal use cases:

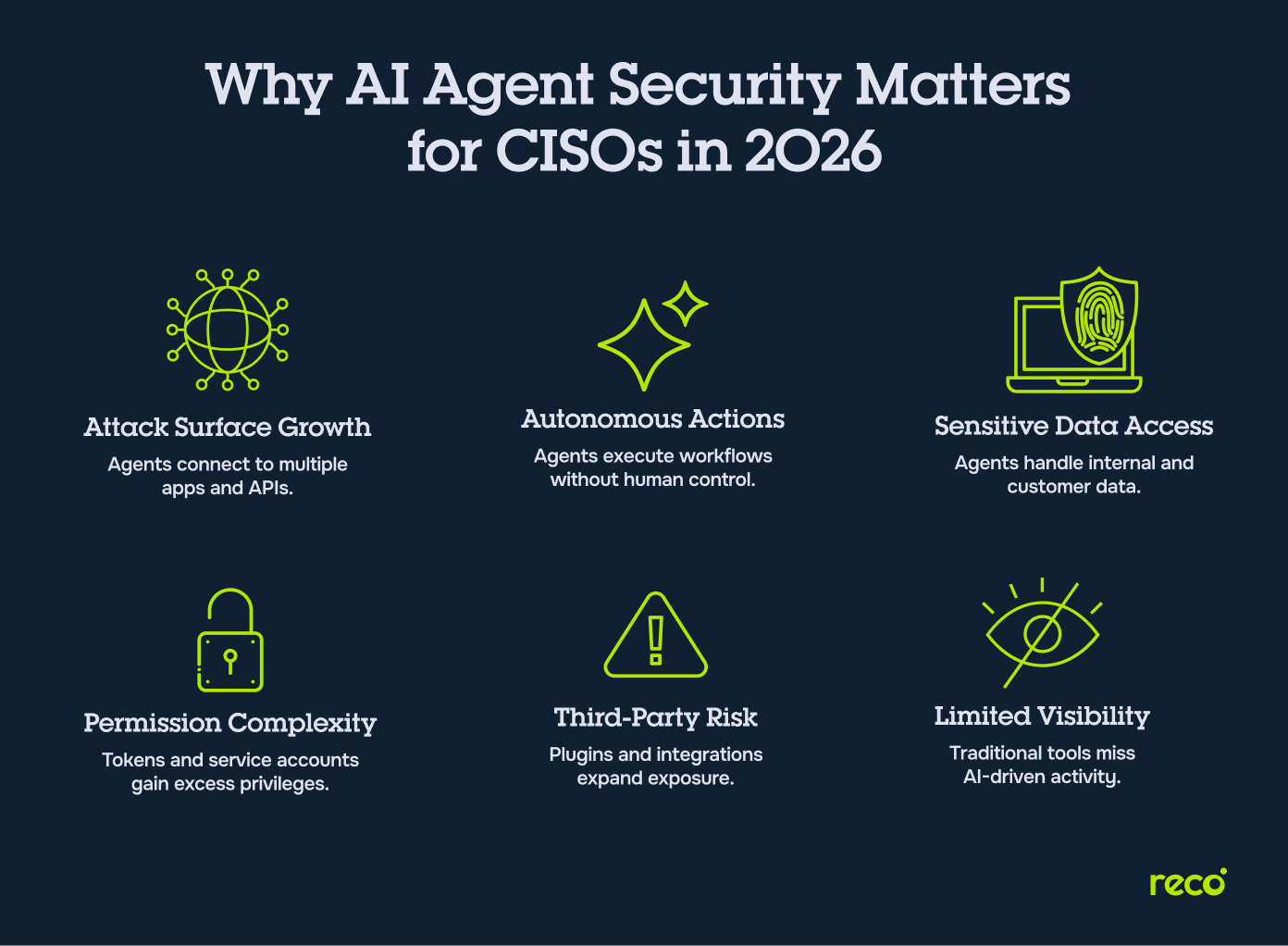

Why AI Agent Security Is a Critical Priority for CISOs in 2026

AI agents add a new layer that security teams must protect, one that extends beyond traditional applications and infrastructure. CISOs need visibility into how these systems access data, interact with enterprise tools, and execute actions across environments. Key risks include:

- Expanded Attack Surface: AI agents interact with multiple SaaS applications, APIs, and services, increasing potential entry points for attackers.

- Autonomous Actions Across Systems: Agents can trigger workflows and execute tasks across applications without direct human supervision.

- Access to Sensitive Enterprise Data: Many AI agents retrieve or process internal documents, customer information, and operational data.

- Complex Identity and Permission Structures: Agents often operate using service accounts, tokens, or delegated permissions that may accumulate excessive privileges.

- Third-Party Integrations and Plugins: External tools and integrations can introduce additional risks if they access sensitive systems or data.

- Limited Visibility Into AI-Driven Activity: Traditional security tools often lack visibility into how AI agents interact with enterprise environments.

Key Features CISOs Should Look For in AI Agent Security Tools

Securing AI agents requires visibility into how they access data, interact with applications, and execute automated tasks. The following capabilities help security teams monitor activity and enforce governance across AI-driven workflows.

Discovery of AI Agents and Autonomous Workflows

Security teams must first understand where AI agents operate across the organization. Effective tools should automatically discover AI-powered applications, autonomous workflows, and integrations running across SaaS platforms and cloud environments. This discovery helps identify shadow AI deployments, undocumented automation, and external AI services that may have access to enterprise systems.
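As a rough illustration of what discovery can look like in practice, the sketch below compares a list of application grants against a sanctioned inventory and surfaces anything not on it. The record format, the app names, and the `APPROVED_APPS` set are all hypothetical; real platforms pull this data from each SaaS provider's admin interfaces.

```python
# Hypothetical sanctioned inventory maintained by the security team.
APPROVED_APPS = {"crm-assistant", "calendar-sync"}


def unsanctioned_grants(grants: list[dict], approved: set[str]) -> list[dict]:
    """Return grants whose application is not in the approved inventory."""
    return [g for g in grants if g["app"] not in approved]


# Hypothetical export of third-party app grants from a SaaS admin console.
grants = [
    {"app": "crm-assistant", "granted_by": "alice", "scopes": ["contacts.read"]},
    {"app": "notes-summarizer-ai", "granted_by": "bob", "scopes": ["files.read", "mail.read"]},
]

# Candidates for shadow AI review: apps with access that nobody sanctioned.
shadow = unsanctioned_grants(grants, APPROVED_APPS)
```

An allowlist comparison like this only finds what the grant export contains, so it complements rather than replaces automated discovery.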

Monitoring AI Agent Access to SaaS Applications and APIs

AI agents often interact with enterprise systems through SaaS applications and APIs. Security platforms should monitor how agents authenticate, which services they access, and what actions they perform. Continuous monitoring helps detect unusual activity patterns, unauthorized API usage, or automated workflows that may introduce security risks.

Identity and Access Governance for AI Agents

AI agents frequently operate using service accounts, tokens, or delegated permissions. Without proper governance, these identities may accumulate excessive privileges across multiple systems. AI agent security platforms should track and manage these identities, ensuring that agents operate with least-privilege access and that permissions remain aligned with their intended roles.
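One simple way to reason about least privilege is to compare the scopes an agent identity has been granted with the scopes it has actually used. The following is a minimal sketch under that assumption; the `AgentIdentity` record, the scope names, and the threshold are hypothetical and do not reflect any specific vendor's data model.

```python
from dataclasses import dataclass, field


@dataclass
class AgentIdentity:
    """Hypothetical record for a service account or token used by an agent."""
    name: str
    granted_scopes: set[str]
    used_scopes: set[str] = field(default_factory=set)


def unused_scopes(identity: AgentIdentity) -> set[str]:
    """Scopes that were granted but never exercised."""
    return identity.granted_scopes - identity.used_scopes


def over_privileged(identities: list[AgentIdentity], max_unused: int = 0) -> list[str]:
    """Names of identities holding more unused scopes than the threshold allows."""
    return [i.name for i in identities if len(unused_scopes(i)) > max_unused]


agents = [
    AgentIdentity("ticket-triage-agent", {"tickets.read", "tickets.write"},
                  {"tickets.read", "tickets.write"}),
    AgentIdentity("report-agent", {"files.read", "files.write", "users.admin"},
                  {"files.read"}),
]

# Identities whose grants exceed what they have been observed using.
flagged = over_privileged(agents)
```

Unused scopes are candidates for removal, not proof of misuse; an agent may hold a permission it needs only occasionally.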

Detection of Risky AI Agent Behavior and Privilege Escalation

Autonomous agents can trigger multiple actions across connected systems, making behavioral monitoring essential. AI security platforms should analyze agent activity to detect abnormal behavior, suspicious access requests, or unexpected workflow execution. Early detection allows security teams to investigate and contain potential security incidents.
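A very basic form of behavioral monitoring is to record which actions each agent normally performs and flag anything outside that baseline. The sketch below assumes a hypothetical `Event` shape and uses exact-match comparison; commercial platforms generally rely on richer statistical or machine learning models.

```python
from collections import defaultdict
from typing import NamedTuple


class Event(NamedTuple):
    """Hypothetical activity record: which agent did what to which system."""
    agent: str
    action: str
    target: str


def build_baseline(history: list[Event]) -> dict[str, set[tuple[str, str]]]:
    """Collect the (action, target) pairs each agent has performed before."""
    baseline: dict[str, set[tuple[str, str]]] = defaultdict(set)
    for e in history:
        baseline[e.agent].add((e.action, e.target))
    return dict(baseline)


def deviations(baseline: dict[str, set[tuple[str, str]]], recent: list[Event]) -> list[Event]:
    """Return recent events that do not appear in the agent's baseline."""
    return [e for e in recent if (e.action, e.target) not in baseline.get(e.agent, set())]


history = [Event("support-agent", "read", "crm"), Event("support-agent", "write", "crm")]
recent = [Event("support-agent", "read", "crm"), Event("support-agent", "grant_role", "iam")]

# The role grant against the identity system is new for this agent and is flagged.
alerts = deviations(build_baseline(history), recent)
```

An agent with no history has an empty baseline, so all of its activity is flagged, which is usually the safer default for newly deployed automation.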

Visibility Into Third-Party AI Integrations and Data Exposure

Many AI agents rely on external tools, plugins, or integrations to complete tasks. These connections can introduce additional risks if they access sensitive systems or enterprise data. Security tools should provide visibility into these integrations and help organizations understand how data flows between AI agents and connected services.

Policy Enforcement for AI Agent Actions and Workflows

Organizations must be able to define and enforce policies governing how AI agents interact with enterprise systems. Security platforms should allow teams to control which resources agents can access and what actions they are permitted to perform. Policy enforcement helps prevent agents from executing unauthorized tasks or interacting with sensitive systems outside their intended scope.
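Policy enforcement of this kind is often modeled as a default-deny allowlist: an agent may perform an action only if a rule explicitly permits it. The sketch below illustrates the idea with a hypothetical `POLICY` table and resource naming scheme; it is not the rule format of any product named in this article.

```python
from fnmatch import fnmatchcase

# Hypothetical policy: agent name -> allowed (action, resource pattern) pairs.
POLICY = {
    "support-agent": [("read", "crm/tickets/*"), ("write", "crm/tickets/*")],
    "finance-agent": [("read", "erp/invoices/*")],
}


def is_allowed(agent: str, action: str, resource: str) -> bool:
    """Default-deny check: permit only actions explicitly listed for the agent."""
    for allowed_action, pattern in POLICY.get(agent, []):
        if action == allowed_action and fnmatchcase(resource, pattern):
            return True
    return False


# A permitted read, and a delete that no rule covers.
ok = is_allowed("support-agent", "read", "crm/tickets/42")
blocked = is_allowed("support-agent", "delete", "crm/tickets/42")
```

Because unknown agents and unlisted actions fall through to `False`, a new agent can do nothing until someone writes a rule for it.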

Compliance Monitoring for AI Governance Frameworks

As AI adoption grows, organizations must maintain oversight and governance over how AI systems operate. Security platforms should support audit logging, monitoring of AI activity, and reporting capabilities that align with internal policies and emerging AI governance frameworks. This visibility helps CISOs demonstrate accountability and maintain compliance as AI systems become more integrated into enterprise operations.
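Audit logging for agent activity can be as simple as emitting one structured record per action. The sketch below shows a hypothetical record format written as a JSON line; the field names are assumptions chosen for illustration rather than a schema required by any governance framework.

```python
import json
from datetime import datetime, timezone


def audit_record(agent: str, action: str, resource: str, decision: str) -> str:
    """Serialize one agent action as a JSON line for an append-only log."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),  # UTC, ISO 8601
        "agent": agent,
        "action": action,
        "resource": resource,
        "decision": decision,  # e.g. "allowed" or "denied"
    }
    return json.dumps(record, sort_keys=True)


line = audit_record("finance-agent", "read", "erp/invoices/2026-001", "allowed")
```

Recording the decision alongside the action lets reviewers see both what agents did and what they were prevented from doing.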

How to Evaluate AI Agent Security Tools for Your Organization

Organizations should evaluate AI agent security platforms against four criteria: visibility into agents and their integrations, identity governance, monitoring capabilities, and integration with existing security infrastructure.
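One way to make these criteria comparable across vendors is a weighted scoring matrix. The sketch below is illustrative only: the weights, the 1 to 5 rating scale, and the candidate names "Tool A" and "Tool B" are hypothetical placeholders, not assessments of any product in this article.

```python
# Hypothetical weights; adjust to reflect your organization's priorities.
WEIGHTS = {
    "visibility": 0.3,
    "identity_governance": 0.3,
    "monitoring": 0.2,
    "integration": 0.2,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-criterion ratings (1 to 5) into a single weighted score."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[c] * ratings[c] for c in WEIGHTS)


# Placeholder ratings from a hypothetical internal evaluation.
candidates = {
    "Tool A": {"visibility": 5, "identity_governance": 4, "monitoring": 3, "integration": 3},
    "Tool B": {"visibility": 3, "identity_governance": 3, "monitoring": 5, "integration": 5},
}

# Rank candidates from highest to lowest weighted score.
ranking = sorted(candidates, key=lambda name: weighted_score(candidates[name]), reverse=True)
```

The weights matter more than the arithmetic: a team that already has strong identity tooling might shift weight toward monitoring or integration.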

Conclusion

As AI adoption accelerates, organizations are moving toward systems where software can make decisions, trigger workflows, and interact with enterprise data with minimal human oversight. This shift requires CISOs to rethink how security controls are applied across SaaS platforms, APIs, and automated processes. Instead of focusing only on applications and infrastructure, security teams must also monitor the identities, permissions, and actions of autonomous systems operating within the environment.

The right AI agent security tools provide the visibility and governance needed to manage this new layer of automation. For CISOs building modern security programs, securing agent-driven activity will become an essential component of enterprise risk management.

How are AI agent security tools different from traditional AI security platforms?

Traditional AI security platforms focus primarily on protecting machine learning models and AI pipelines during development and deployment. AI agent security tools expand this scope by monitoring how autonomous systems interact with enterprise environments after they are deployed.

Key differences include:

- Monitoring autonomous actions across SaaS applications, APIs, and internal systems

- Tracking identities used by AI agents, including service accounts and delegated credentials

- Detecting risky integrations and workflows created by automated agents

- Enforcing policies governing what actions AI agents can perform

As AI adoption grows, security teams increasingly need tools that monitor how AI systems operate in real environments, not just how models are built.

What security risks do AI agents introduce for enterprise environments?

AI agents introduce new risks because they can automatically interact with applications, data sources, and services across multiple systems. These interactions may occur without direct human oversight.

Common risks include:

- Excessive permissions granted to AI agents or service accounts

- Unauthorized access to sensitive data through APIs or integrations

- Unmonitored third-party tools and plugins connected to AI workflows

- Automated workflows executing unintended actions across enterprise systems

- Limited visibility into how AI agents interact with SaaS environments

Security platforms that provide application discovery and visibility into connected services help organizations identify these risks early. For example, solutions that support SaaS application discovery allow security teams to understand which applications and integrations AI agents interact with.

Can AI agent security tools monitor both internal and third-party AI agents?

Yes. Many modern AI security platforms are designed to monitor activity across both internally developed AI agents and third-party AI services, although coverage varies by product.

These tools typically monitor:

- AI agents built internally by engineering teams

- Third-party AI assistants integrated into SaaS platforms

- External AI services connected through APIs

- Automated workflows that use AI models or LLMs

Monitoring both types of agents is essential because many organizations adopt AI through external tools and SaaS integrations before developing their own AI systems. Platforms that support continuous monitoring of SaaS environments and integrations make it easier to identify how AI tools interact with enterprise systems.

How does Reco help CISOs manage identity and access risks introduced by AI agents?

Reco helps CISOs manage identity risks by providing visibility into how identities interact with SaaS applications, APIs, and integrations. AI agents often operate using service accounts, tokens, or delegated permissions, which can accumulate excessive privileges over time.

Reco helps security teams address these risks by:

- Tracking identities across SaaS applications and integrations

- Identifying excessive permissions and risky access patterns

- Detecting suspicious identity activity across connected systems

- Enforcing identity governance policies

These capabilities are supported through Reco’s Identity and Access Governance platform, which helps organizations manage permissions, monitor identity activity, and reduce identity-related security risks across SaaS environments.

How does Reco detect risky AI integrations across SaaS environments?

AI agents frequently rely on SaaS integrations and external services to complete tasks. These integrations can introduce security risks if they access sensitive data or operate with excessive permissions.

Reco detects risky integrations by:

- Automatically discovering connected SaaS applications and integrations

- Monitoring how integrations access enterprise data

- Identifying suspicious or high-risk automation workflows

- Detecting abnormal activity patterns across SaaS environments

These capabilities are supported through Reco’s SaaS Posture Management and Compliance platform, which continuously analyzes SaaS environments to identify misconfigurations, risky integrations, and policy violations.
