Most CISOs ask this question the wrong way. They frame it as an audit question: "Let's find out what's in our environment." That's the right goal. It's the wrong starting point.

The correct question is narrower and more urgent: which AI tools have access to production data right now, and how long have they had access? This reframing changes what you're looking for, where you look, and what you do when you find it.

The narrower question matters because the answer to the broader one is already known. Approximately 71% of employees use AI tools without IT approval (Reco, 2025). The tools are already there. The question is what they can access.

The risk isn't that employees use AI. It's that the AI they use may have been sitting on your customer data for 400 days.

This article maps the shadow AI landscape based on actual data exposure rather than tool popularity and shows where your highest-risk concentrations are likely to be.

Security teams instinctively sort shadow AI by volume: how many tools, how many users, how many connections. It's countable. It looks rigorous. It's also the wrong lens for prioritization.

A company might have 50 employees using Midjourney and three using Otter.ai. By count, Midjourney is the bigger problem. By data exposure, it isn't close. Otter.ai records every meeting attended by those three employees, including board calls, M&A discussions, and personnel decisions. Midjourney generates images.

The correct prioritization axis is data access scope. What can this tool read, store, or transmit from your environment? That single question separates nuisance risk from breach risk.
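To make the contrast concrete, the following sketch ranks the Midjourney and Otter.ai example both ways. The `access_scope` scores are hypothetical values on an illustrative 0 to 3 scale, not a Reco metric or an industry standard. The only point is that the two lenses produce opposite orderings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShadowTool:
    name: str
    users: int
    access_scope: int  # hypothetical 0-3 score: 0 = no org data, 3 = verbatim sensitive content


tools = [
    ShadowTool("Midjourney", users=50, access_scope=1),  # sees prompt text only
    ShadowTool("Otter.ai", users=3, access_scope=3),     # records and transcribes whole meetings
]


def rank_by_count(items: list[ShadowTool]) -> list[str]:
    """Volume lens: most users first."""
    return [t.name for t in sorted(items, key=lambda t: t.users, reverse=True)]


def rank_by_exposure(items: list[ShadowTool]) -> list[str]:
    """Exposure lens: widest data access first; user count only breaks ties."""
    ordered = sorted(items, key=lambda t: (t.access_scope, t.users), reverse=True)
    return [t.name for t in ordered]


print("By count:   ", rank_by_count(tools))     # Midjourney first
print("By exposure:", rank_by_exposure(tools))  # Otter.ai first
```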

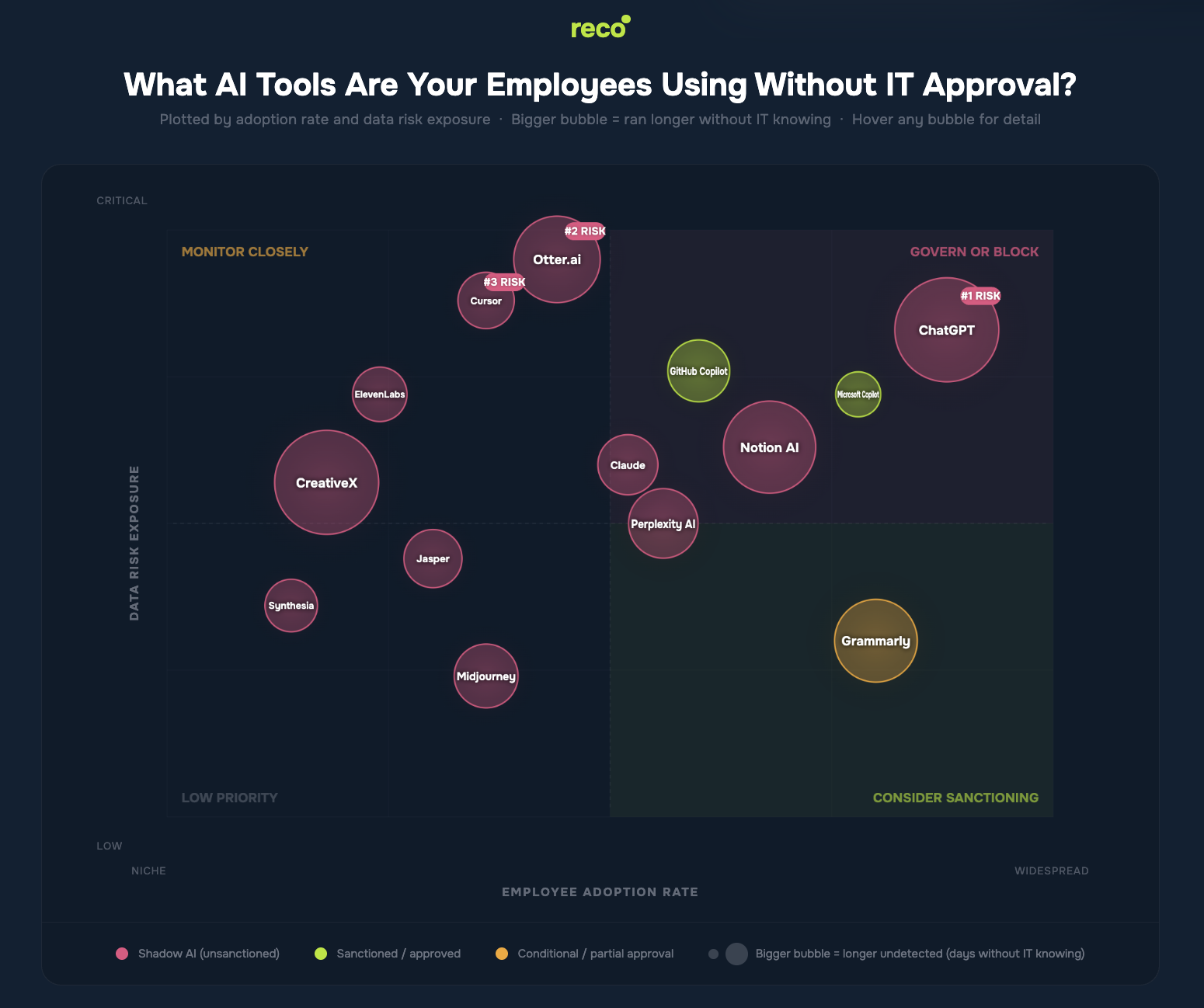

The chart above plots 14 common enterprise AI tools across two axes: employee adoption and the level of sensitive data each tool can access. Bubble size represents how long each tool typically operates before IT discovers it.

The top-right quadrant (GOVERN OR BLOCK) is where your board conversation should start.
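The quadrant logic behind a chart like this can be sketched as two thresholds. This is a minimal illustration assuming both axes are normalized to 0..1 with a hypothetical midpoint of 0.5. The chart's actual scales and cut-offs are not stated here, and only the top-right label comes from it.

```python
def quadrant(adoption: float, sensitivity: float, midpoint: float = 0.5) -> str:
    """Place a tool on the adoption x sensitivity grid, both axes normalized to 0..1.

    Only "Govern or Block" is a label taken from the chart; the other three
    strings are plain descriptions, not the chart's own quadrant names.
    """
    high_adoption = adoption >= midpoint
    high_sensitivity = sensitivity >= midpoint
    if high_adoption and high_sensitivity:
        return "Govern or Block"
    if high_sensitivity:
        return "low adoption, high sensitivity"
    if high_adoption:
        return "high adoption, low sensitivity"
    return "low adoption, low sensitivity"


# Hypothetical coordinates, not values read off the chart.
print(quadrant(adoption=0.8, sensitivity=0.9))  # Govern or Block
print(quadrant(adoption=0.1, sensitivity=0.9))  # low adoption, high sensitivity
```

Bubble size (time before discovery) is deliberately left out of the placement; one option would be to use it to order tools within the same quadrant.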

Shadow AI risk clusters into three distinct patterns. Each requires a different governance response.

Meeting transcription tools such as Otter.ai and Fireflies represent the most underestimated risk category in enterprise AI. They are typically deployed by individual contributors without IT involvement, require no technical setup, and immediately begin recording everything.

A single employee using Otter.ai for six months may have provided an external vendor with verbatim transcripts of every meeting they attended. This includes product roadmap reviews, customer negotiations, personnel discussions, and earnings preparation calls. No data classification policy applies. No DLP tool detects it. The audio and text reside on a third-party server under terms that most users have never read.

GitHub Copilot is sanctioned at most enterprises. Cursor is not. Both behave the same way technically: they send code context to a remote model to generate completions. The difference is that one has gone through procurement review and the other has not, meaning one is covered by contractual data handling protections while the other operates under free-tier terms.

Engineers adopt Cursor because it is faster than Copilot for certain tasks. This usage can result in code context being transmitted to external models, including database schemas, API keys in comments, and proprietary algorithms. The exposure is not intentional, but it does not need to be intentional to cause damage.
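One mitigation a team might consider is scanning code that could be sent as model context for credential-like strings before an assistant reads it. The following is a minimal sketch with a few hypothetical regex rules. It is not how Cursor or Copilot select or filter context, and dedicated secret scanners use far larger rule sets.

```python
import re

# A few illustrative rules; production secret scanners ship far larger rule sets.
SECRET_PATTERNS = {
    # AWS access key IDs: "AKIA" followed by 16 uppercase letters or digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # Assignments such as api_key = "..." with a quoted value of 8+ characters.
    "generic_api_key": re.compile(
        r"(?i)\b(api[_-]?key|secret|token)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
    # PEM private key headers.
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def find_secrets(code_context: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line that matches a rule."""
    hits = []
    for lineno, line in enumerate(code_context.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, rule))
    return hits


snippet = (
    '# TODO remove before commit: api_key = "example-key-1234567890"\n'
    "CREATE TABLE customers (id INT, email TEXT);\n"
    'aws_key = "AKIAIOSFODNN7EXAMPLE"\n'
)
print(find_secrets(snippet))  # [(1, 'generic_api_key'), (3, 'aws_access_key_id')]
```

The schema line passes untouched: pattern rules like these catch credentials, but not database schemas or proprietary algorithms, so they narrow the exposure rather than close it.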

ChatGPT accounts for 53% of all shadow AI traffic in enterprise environments (Reco, 2025). It is the default tool employees use to draft text, summarize documents, and troubleshoot problems. The risk is not any single interaction. It is the aggregate: thousands of employees, millions of prompts, and continuous data transfer to an external service that operates outside your enterprise data processing agreements.
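A back-of-the-envelope model shows why the aggregate dominates. Assume, purely for illustration, that each prompt independently has a small probability p of containing sensitive data and that n prompts are sent. Neither number comes from the Reco data.

```latex
\begin{aligned}
\mathbb{E}[\text{sensitive prompts}] &= np, \\
P(\text{at least one sensitive prompt}) &= 1 - (1 - p)^{n} \approx 1 - e^{-np}, \\
\text{with } p = 10^{-3},\ n = 10^{6}: \quad np &= 1000, \quad 1 - e^{-1000} \approx 1.
\end{aligned}
```

At that scale the question is not whether sensitive data reaches the external service, but how much of it does.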

The non-obvious insight is that sanctioned does not mean safe. Microsoft Copilot sits in the "Govern or Block" quadrant despite full enterprise approval because its M365 integration provides access across tenant-wide data sources, including email, SharePoint, and Teams. Governance of sanctioned AI is a separate problem from the discovery of shadow AI, and most organizations are only addressing the latter.

The tool matters less than where it is deployed. Risk is determined by data access scope. Grammarly in marketing carries a moderate risk. In legal, where it processes contract drafts, privilege logs, and litigation strategy documents, it becomes a high-risk exposure.
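The same tool can land in different risk bands depending on the data around it. The following minimal sketch scores a tool as how much of a user's text it can read multiplied by how sensitive that department's data is. The weights, thresholds, and the `contextual_risk` helper are hypothetical, chosen only so that the Grammarly example above comes out as described.

```python
# Hypothetical sensitivity of the data each department typically handles (0-4).
DEPARTMENT_SENSITIVITY = {
    "marketing": 2,  # campaign copy, public-facing drafts
    "legal": 4,      # contract drafts, privilege logs, litigation strategy
}

# Hypothetical share (0-1) of a user's working text that the tool can read.
TOOL_READ_SHARE = {
    "Grammarly": 1.0,  # assumed: a writing assistant sees the full text being edited
}

# Score thresholds checked from highest to lowest.
RISK_BANDS = [(3.5, "high"), (1.5, "moderate"), (0.0, "low")]


def contextual_risk(tool: str, department: str) -> str:
    """Rate a tool in one department: how much it reads x how sensitive that data is."""
    score = TOOL_READ_SHARE[tool] * DEPARTMENT_SENSITIVITY[department]
    for threshold, band in RISK_BANDS:
        if score >= threshold:
            return band
    return "low"


print(contextual_risk("Grammarly", "marketing"))  # moderate
print(contextual_risk("Grammarly", "legal"))      # high
```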

Risk level reflects the sensitivity of the data typically accessed, not usage frequency. The same tool may appear across multiple rows.

Traditional SaaS security posture management (SSPM) tools identify AI tools through periodic scans and approved application lists. They miss anything employees connect directly via OAuth, browser extensions, or API keys outside sanctioned channels. That accounts for most shadow AI.
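The gap is easiest to see in code. An approved-application list says only what is sanctioned; finding shadow AI means starting from the grants that actually exist and subtracting that list. This minimal sketch assumes OAuth grant records have already been exported from the identity provider (collection is platform-specific and not shown). The `OAuthGrant` shape and the approved list are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OAuthGrant:
    user: str  # account that authorized the app
    app: str   # third-party application that received the grant


# Hypothetical sanctioned list, as a procurement-driven inventory might hold it.
APPROVED_APPS = {"GitHub Copilot", "Grammarly"}


def shadow_apps(grants: list[OAuthGrant], approved: set[str]) -> dict[str, set[str]]:
    """Map each app that holds a grant but is not on the approved list to its users."""
    found: dict[str, set[str]] = {}
    for grant in grants:
        if grant.app not in approved:
            found.setdefault(grant.app, set()).add(grant.user)
    return found


grants = [
    OAuthGrant("alice@example.com", "Otter.ai"),
    OAuthGrant("bob@example.com", "Grammarly"),
    OAuthGrant("carol@example.com", "Otter.ai"),
]
print(shadow_apps(grants, APPROVED_APPS))
```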

Reco’s AI Discovery maps every OAuth connection across your SaaS environment in real time. When an employee connects Otter.ai to their Google Calendar at 9 a.m. on a Monday, it appears in your inventory before the first meeting of the day. No quarterly audit. No helpdesk ticket. No employee survey.

The Knowledge Graph then adds context: which users are connected to each tool, what data each tool can access based on granted OAuth scopes, and how that exposure maps to your data classification tiers. The finding is not "Otter.ai is connected." It is "Otter.ai has read access to 14 calendars, including those of the CISO and CFO, with meeting content flowing to an external server in a jurisdiction outside your data residency requirements."
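The following is a minimal sketch of turning granted OAuth scopes into a finding like the one above. It is not Reco's Knowledge Graph. The scope strings are real Google OAuth scopes, but the tier assigned to each, the `Grant` record shape, and the executive list are hypothetical.

```python
from dataclasses import dataclass

# Real Google OAuth scope strings; the tier assigned to each is a hypothetical policy.
SCOPE_TIER = {
    "https://www.googleapis.com/auth/userinfo.email": "internal",
    "https://www.googleapis.com/auth/calendar.readonly": "confidential",
    "https://www.googleapis.com/auth/drive.readonly": "restricted",
    "https://www.googleapis.com/auth/gmail.readonly": "restricted",
}
TIER_RANK = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}


@dataclass(frozen=True)
class Grant:
    user: str
    app: str
    scopes: tuple[str, ...]


def assess(app: str, grants: list[Grant], executives: set[str]) -> dict:
    """Turn one app's OAuth grants into a context-rich finding rather than a yes/no."""
    mine = [g for g in grants if g.app == app]
    users = {g.user for g in mine}
    # Unmapped scopes fail closed: treat them as restricted so someone reviews them.
    tiers = {SCOPE_TIER.get(scope, "restricted") for g in mine for scope in g.scopes}
    highest = max(tiers, key=TIER_RANK.__getitem__, default="public")
    return {
        "app": app,
        "connected_users": len(users),
        "highest_tier": highest,
        "executives_connected": sorted(users & executives),
    }


CAL = "https://www.googleapis.com/auth/calendar.readonly"
grants = [
    Grant("ciso@example.com", "Otter.ai", (CAL,)),
    Grant("cfo@example.com", "Otter.ai", (CAL,)),
    Grant("analyst@example.com", "Otter.ai", ("https://www.googleapis.com/auth/userinfo.email",)),
]
print(assess("Otter.ai", grants, {"ciso@example.com", "cfo@example.com"}))
```

Defaulting unknown scopes to the highest tier is a deliberate design choice: a scope the policy has never seen is surfaced for review instead of silently scored as harmless.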

That is the difference between a tool count and a risk assessment.

Shadow AI risk is driven by data access, not tool presence. Most organizations already know unsanctioned tools exist across their environment. What remains unclear is what those tools can access, how long that access has persisted, and how that exposure maps to actual business risk.

Focusing on tool counts creates a false sense of control. Real risk lives in data flows, access scopes, and the accumulation of exposure over time. That is why the shift matters: from inventory to context, from periodic discovery to continuous visibility, and from reactive controls to governance grounded in how data is accessed and shared.

The question is no longer which tools employees are using, but what those tools already know about your business, what they can still access, and where that access creates exposure you do not control.

Gal is the Cofounder & CPO of Reco. Gal is a former Lieutenant Colonel in the Israeli Prime Minister's Office. He is a tech enthusiast with a background as a security researcher and hacker. Gal has led teams in multiple cybersecurity areas, with expertise in the human element.